Problems tagged with "forward pass"

Problem #125

Tags: lecture-11, forward pass, quiz-06, neural networks

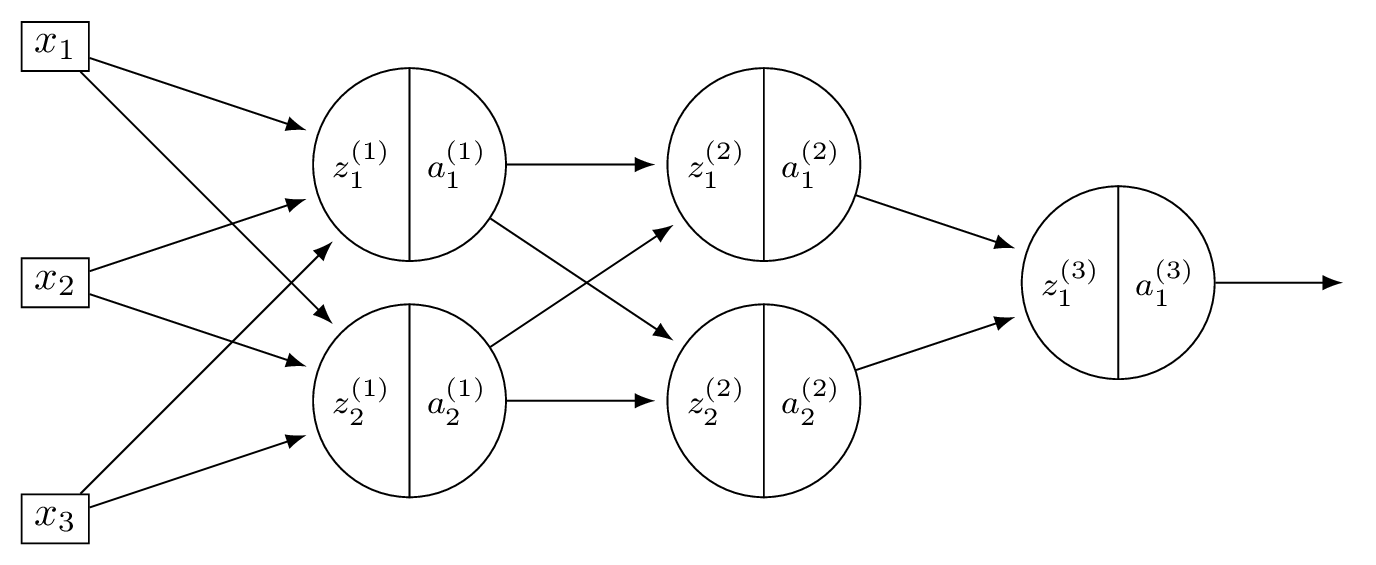

Consider a neural network \(H(\vec x)\) shown below:

Let the weights of the network be:

Assume that all nodes use linear activation functions, and that all biases are zero.

Suppose \(\vec x = (2, 0, -2)^T\).

Part 1)

What is \(a_1^{(1)}\)?

Solution

With linear activations, \(a = z\) at every node.

\(z_1^{(1)} = 3(2) + (-1)(0) + (-1)(-2) = 8\), so \(a_1^{(1)} = 8\).
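The weighted sum for a single node can be checked in a few lines of Python. This is a minimal sketch: the helper name `weighted_sum` is illustrative, and the weights (3, -1, -1) are read off the arithmetic in the solution above, not from the weight table.

```python
def weighted_sum(weights, inputs, bias=0):
    """Pre-activation z = w . x + b for one node."""
    return sum(w * x for w, x in zip(weights, inputs)) + bias

x = [2, 0, -2]
w_node1 = [3, -1, -1]  # assumed: taken from the solution's arithmetic

z1 = weighted_sum(w_node1, x)
a1 = z1  # linear activation: a = z
print(a1)  # 8
```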

Part 2)

What is \(a_2^{(2)}\)?

Solution

Layer 1: \(z_2^{(1)} = 2(2) + 2(0) + 4(-2) = -4\), so with \(a_1^{(1)} = 8\) from Part 1, \(\vec a^{(1)} = (8, -4)^T\). Layer 2: \(z_2^{(2)} = 1(8) + 2(-4) = 0\), so \(a_2^{(2)} = 0\).

Part 3)

What is \(H(\vec x)\)?

Solution

Layer 2: \(z_1^{(2)} = 5(8) + 1(-4) = 36\) and \(z_2^{(2)} = 0\) from Part 2, so \(\vec a^{(2)} = (36, 0)^T\). The output is \(H(\vec x) = 2(36) + (-3)(0) = 72\).
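The three parts together make up one forward pass, which can be sketched layer by layer. The weight values below are assumptions inferred from the arithmetic in the solutions to Problems #125 and #126, since the weight table is not reproduced here. Each row is taken to hold the incoming weights of one node, and the helper name `linear_layer` is illustrative.

```python
def linear_layer(weight_rows, inputs):
    """One layer with linear activations and zero biases.

    Each row of weight_rows holds the incoming weights of one node.
    """
    return [sum(w * a for w, a in zip(row, inputs)) for row in weight_rows]

# Assumed weights, inferred from the worked solutions.
W1 = [[3, -1, -1], [2, 2, 4]]  # input -> hidden layer 1
W2 = [[5, 1], [1, 2]]          # hidden layer 1 -> hidden layer 2
W3 = [[2, -3]]                 # hidden layer 2 -> output

x = [2, 0, -2]
a1 = linear_layer(W1, x)       # [8, -4]
a2 = linear_layer(W2, a1)      # [36, 0]
H = linear_layer(W3, a2)[0]    # 72
print(a1, a2, H)
```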

Problem #126

Tags: neural networks, quiz-06, activation functions, forward pass, lecture-11

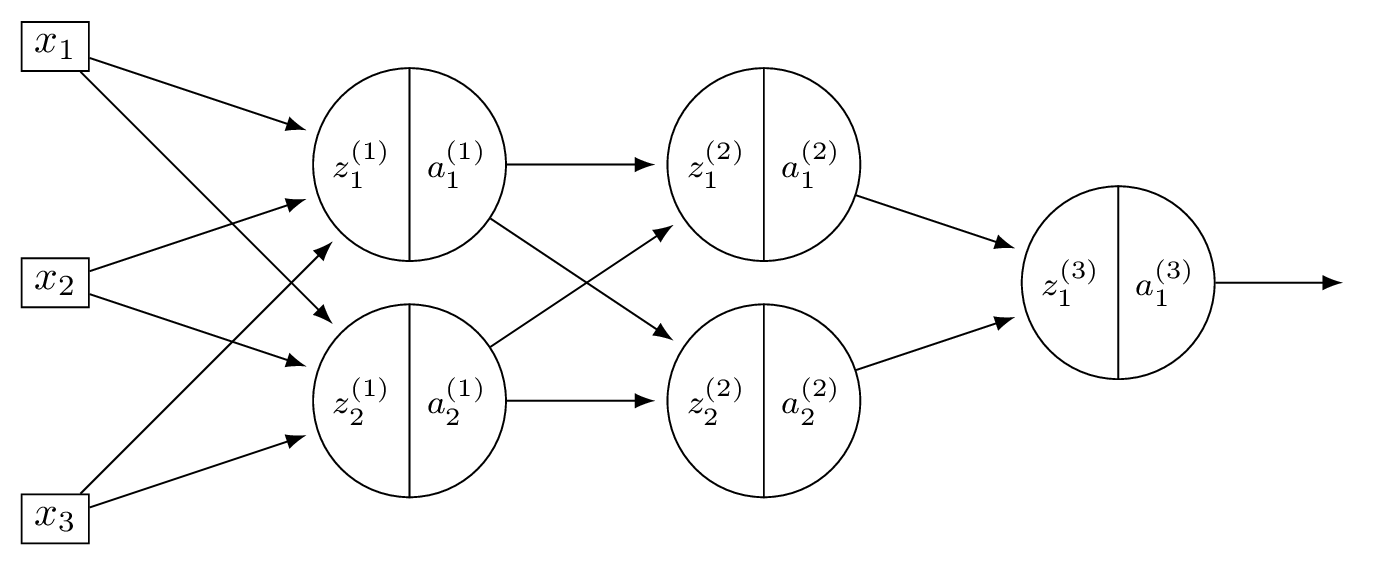

Consider a neural network \(H(\vec x)\) shown below:

Let the weights of the network be:

Assume that all hidden nodes use ReLU activation functions, that the output node uses a linear activation, and that all biases are zero.

Suppose \(\vec x = (2, 0, -2)^T\).

Part 1)

What is \(a_1^{(1)}\)?

Solution

\(z_1^{(1)} = 3(2) + (-1)(0) + (-1)(-2) = 8\), so \(a_1^{(1)} = \text{ReLU}(8) = 8\).

Part 2)

What is \(a_2^{(2)}\)?

Solution

\(z_2^{(1)} = 2(2) + 2(0) + 4(-2) = -4\), so \(a_2^{(1)} = \text{ReLU}(-4) = 0\). Now \(\vec a^{(1)} = (8, 0)^T\). Layer 2: \(z_2^{(2)} = 1(8) + 2(0) = 8\), so \(a_2^{(2)} = \text{ReLU}(8) = 8\).

Part 3)

What is \(H(\vec x)\)?

Solution

\(z_1^{(2)} = 5(8) + 1(0) = 40\), so \(a_1^{(2)} = \text{ReLU}(40) = 40\). With \(a_2^{(2)} = 8\) from Part 2, \(\vec a^{(2)} = (40, 8)^T\). The output node is linear, so \(H(\vec x) = 2(40) + (-3)(8) = 56\).
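The same computation with ReLU on the hidden layers can be sketched as a small loop over layers. The weights are assumptions read off the arithmetic in the solutions above, and the names `relu`, `identity`, and `forward` are illustrative helpers.

```python
def relu(z):
    return max(0, z)

def identity(z):
    return z

def forward(x, layers):
    """Run a forward pass with zero biases.

    layers is a list of (weight_rows, activation) pairs; each row holds
    the incoming weights of one node. Returns the activations per layer.
    """
    a = x
    activations = []
    for weight_rows, act in layers:
        a = [act(sum(w * v for w, v in zip(row, a))) for row in weight_rows]
        activations.append(a)
    return activations

# Assumed weights, inferred from the worked solutions.
layers = [
    ([[3, -1, -1], [2, 2, 4]], relu),  # hidden layer 1
    ([[5, 1], [1, 2]], relu),          # hidden layer 2
    ([[2, -3]], identity),             # linear output node
]

a1, a2, out = forward([2, 0, -2], layers)
H = out[0]
print(a1, a2, H)  # [8, 0] [40, 8] 56
```

The only difference from the all-linear case is that ReLU sets \(a_2^{(1)}\) to 0 instead of -4, and that change propagates through both later layers.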

Problem #135

Tags: lecture-11, forward pass, quiz-06, neural networks

Consider a neural network with 2 input nodes, 2 hidden nodes, and 1 output node. There is no non-linear activation function used in the network (i.e., all activations are linear). The input is \((x_1, x_2) = (1, 1)\).

The weights and biases are as follows. Hidden layer: \(W^{(1)} = \begin{pmatrix} 1 & 1 \\ 1 & 1 \end{pmatrix}\), \(\vec b^{(1)} = (-1, -1)^T\). Output layer: \(\vec w^{(2)} = (-1, -1)^T\), \(b^{(2)} = -1\).

What is the output of this neural network?

Solution

Hidden node 1: \(\psi_1 = 1(1) + 1(1) - 1 = 1\).

Hidden node 2: \(\psi_2 = 1(1) + 1(1) - 1 = 1\).

Output: \(H = (-1)(1) + (-1)(1) - 1 = -3\).
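With nonzero biases, each node adds its bias to the weighted sum before the (here linear) activation. A minimal sketch of this computation follows. The helper name `affine` is illustrative, and rows of `W1` are assumed to correspond to hidden nodes; because every entry of \(W^{(1)}\) is 1, that convention does not affect the result.

```python
def affine(weight_rows, biases, inputs):
    """Compute W a + b, one output per row of weight_rows."""
    return [
        sum(w * v for w, v in zip(row, inputs)) + b
        for row, b in zip(weight_rows, biases)
    ]

x = [1, 1]
W1 = [[1, 1], [1, 1]]
b1 = [-1, -1]
w2 = [[-1, -1]]
b2 = [-1]

hidden = affine(W1, b1, x)     # [1, 1]
H = affine(w2, b2, hidden)[0]  # -3
print(hidden, H)
```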